Background

If you follow press about the technology industry you see daily talking points about the rise of artificial intelligence and how it will reshape industries and competitive landscapes. At Serverless Solutions we fundamentally believe that to be true, but we have clients that still have what you might consider rudimentary use cases on data and data consumption. Let’s call it reporting.

How you do reporting has changed a little in recent years. Traditionally you might have invested a lot of time developing a data warehouse or operational data store then added reporting capabilities to it. Those are not bad investments even today, but most businesses and users will not wait months and years for that data to be available to them. As a result we have seen the rise of powerful visualization and data consolidation tools like Tableau and PowerBI that enable power business users to do things with data that were previously the domain of IT.

Google has a powerful answer to this new set of expectations and tools in the marketplace. I say powerful because there is power in simplicity. Google has done an amazing job of making their services simple and approachable. But behind them are amazing things happening that allow their users to develop and deploy quickly and scale to mind-boggling proportions if needed.

I call these services from Google ‘serverless’. What we are really saying when we use that term is Google is managing the provisioning and deployment for you at run-time allowing you as a creator to focus on creating things rather than managing them.

Leverage GCP for a serverless data warehouse

Assumptions

Before we dive into specific services, let’s talk about infrastructure vs platform services. In the cloud world you hear about Infrastructure as a service (IaaS), Platform as a service (PaaS) and Software as a Service (SaaS) and recently things like Serverless or Function based infrastructure (FaaS).

The services we will discuss in this paper occupy the serverless and/or SaaS space. There are many advantages to this:

- You do not need to worry about infrastructure. You can focus on creating and trust the cloud provider to deploy and scale your creation based on need.

- The services are usually cheaper to operate because you are relying on the cloud provider to abstract administration and management away from you.

- The services are usually cheaper to consume because the cloud provider is allocating resources as you need them rather than keeping resources idle ‘in-case’ you need them

Restating the above – The biggest assumption we are making is this is a cloud based, fully managed (aka serverless) infrastructure. I am explicitly stating this again because this differs from what you see from tools like Tableau that work as a desktop tool or an open source deployment via a container of something like Apache-Drill.

Combining 3 simple services to provide powerful reporting capabilities

Our end goal is to provide the following for our clients:

- Powerful / Nice looking reports

- BI self-service via reports or standard SQL for advanced users

- No infrastructure / managed infrastructure

- Low Cost

To achieve those goals we will talk about combining 3 simple services from Google Cloud Platform.

- Cloud DataPrep – Think of this like a graphical interface to build what we might have traditionally called ETL (Extract Transform & Load) services. Under the covers, this actually becomes a Cloud Data Flow job, but Google abstracts the development of what might normally be python or java code and generates it for you. Once complete, you can schedule these jobs, run them as batch or event based and you can point them at multiple destinations. In our scenario, we will point it at BigQuery.

- BigQuery – The best definition I have seen of BigQuery is a “managed data warehouse”. I think of it as the PaaS version of a database. Only it is optimized for extreme scale and use like a data warehouse (fast reads, rather than writes). The BigQuery interface uses standard SQL if a power user or developer wants to interact directly with the data. For this use case, we are using BigQuery as a data source and will do our query development dynamically via DataStudio.

- DataStudio – After data is landed in BigQuery, you can connect to it via DataStudio. DataStudio is good for ‘pretty reporting’ as well as allowing power users to dynamically explore and blend data.

Let’s take a tour of each of the services above in the order we would put this design pattern to use.

Cloud DataPrep Tour:

My first interactions with Cloud DataPrep were frustrating and I abandoned working with the service. Initially I did not like that:

- I had to agree to terms allowing someone other than Google to see my data (Trifecta)

- It abstracted things away from me that I wanted to control

The reality is I did not fully appreciate the assumptions above: This was a cloud managed service and I was forcing a different approach into a tool that did not make sense.

I revisited the service because a client gave me a requirement and a timeline that was very aggressive and I needed some help. I did not have time to struggle though Python and Pandas data wrangling. I forced myself to use Cloud DataPrep and I am glad I did. After getting more comfortable with it, I found it to be what I have needed for a long time when it comes to data cleaning and preparation. This quote from the Google site captures my thoughts well:

“Cloud Dataprep automatically detects schemas, datatypes, possible joins, and anomalies such as missing values, outliers, and duplicates so you get to skip the time-consuming work of profiling your data and go right to the data analysis”

So, it makes a guess at what I want. There are some tools that I really dislike because they are so poor at making good recommendation or are difficult to change once they make a recommendation. Cloud Data Prep seemed reasonably good at guessing what I wanted and when it was wrong, it was easy to change and see my changes side-by-side with what was previously suggested.

This video by Google does a great job of providing an overview of Cloud Data Prep.

Basic flow when using Cloud Data Prep

Using the diagram above we can talk about the highlights of using Data Prep by activity.

Service Highlights:

Data Visualization & Exploration: When you are working with data in Cloud DataPrep, the tool is constantly showing you distributions and things about your data. If I were wrangling data in Python, I might be doing histograms or using packages like missingno to ‘see’ what my data looks like and explicitly setting data types. That equates to lines of code and manipulating the code until it shows and does what I want. Cloud DataPrep makes very intelligent guesses about your data and shows it to you in real time.

In the graphic above, Data Prep is showing distributions for data and it has made some guesses at the type of data and the format it should be in. Note the icon at the left of Phone_Number column. Data Prep thinks this column is a phone number and has applied a data type and a data mask to it. It is also showing me that in this sample (more on samples later), 19 of the records do not fit the data type or mask. If I do not like the guesses Data Prep made for me, it is simple to change with a few clicks into sub menus. My changes become what it calls a ‘recipe’ . A recipe represents all of my changes to the data and is what will later be compiled to a Cloud DataFlow job on my behalf.

Data Manipulation (Recipe Development):

Data Prep has a powerful set of actions you can execute on your data and these actions work well on structured and unstructured data. The toolbar below shows the top level functions like merge or split and each of these has a subset of functionality that supports them.

These actions turn into recipes and can be sequenced to accomplish complex data wrangling activities.

Samples:

To speed development, you can point Cloud DataPrep at a large set of data and it will intelligently sample that data so that you are building your recipes on a smaller data set. This allows for a responsive interface and quick prototyping.

Fully Managed (Run Job/Land Data):

This is the magic part. A fully managed service. When you are happy with your recipe and how your data should look, you run it as a job. You can set this job to run as a batch or based on an event (like a scheduled time or appearance of a file). You also tell it where the data should go. In our pattern, we are going to land it in BigQuery which we will discuss later. When you run the job, some powerful things happen. Data Prep compiles your recipe(s) into code and deploys and manages them on your behalf. This means your Cloud DataPrep job becomes a Cloud DataFlow job. We will not discuss DataFlow, but think of DataFlow as the service you would write code in to do ETL. Cloud DataPrep is generating this code and deployment for us.

Looking at the graphic below we can peek into what is happening behind the scenes when we run a job. Cloud DataPrep is doing the transformations in the recipe and scaling up ‘workers’ based on need. This is the fully managed part. I am not worrying about scaling up or down any resources.

If you are like me, I was nervous the first time I saw my job spin up 100 workers to accomplish what I thought was a simple task. I was thinking, ‘geesh – what is this going to cost’. As it turns out, it is very cheap.

Service Cost:

Cloud DataPrep will change pricing at the end of 2018. We will use the proposed pricing for this document. The pricing will vary by region, but right now the service seems to be limited to the central US region (Iowa). The pricing will be $0.6 per Data Flow worker per hour.

For example, if the job above used 8 workers for an hour the cost would be:

1 hour * $0.60 * 8 vCPUs = $4.80

You can monitor this cost and estimate it from the jobs menu.

Side Note: From an architecture perspective, this type of pricing indicates you should look at an initial batch load then do incremental changes from there rather than what is often referred to as kill-and-fill.

BigQuery Tour:

Now that we have cleaned, transformed and loaded data, let’s look at where that data has landed. For Google centric overview, this video is a great start to get oriented.

BigQuery is one of the more exciting services in Google Cloud Platform. There is parity developing across the 3 primary public cloud providers in many areas, but this is one area that Google is years ahead of Amazon and Microsoft. My fear for Big Query is that it is a successful service and as a result Google is investing heavily in it. That sounds odd, but recently it has been taking on many new features like inline Machine Learning. These features are interesting, but in my opinion the thing that makes BigQuery so compelling is that it is a fully managed data warehouse with a simple interface (SQL) that operates at any scale you want to throw at it. I hope that does not get lost in the features and functions.

Let’s look at some of the fundamentals of BigQuery.

Simple SQL Interface

BigQuery has a simple, web based interface. You can look at public and private (your) datasets and interact with them via standard SQL. See the interface below.

Separation of Storage and Compute

One of the more innovative things about BigQuery is the pricing model. There are 2 levers for cost in BigQuery.

- BigQuery Storage – You can think about BigQuery Storage like another storage bucket or file system. When we were discussing Cloud DataPrep earlier, I had my Cloud DataPrep job land the data in BigQuery Storage. To do that, all I needed to do was set-up a data store, which is really as simple as naming a folder then point my Cloud DataPrep job at that location.

- BigQuery Compute – This is the innovation. You are charged for the data that is processed via a query, not the resources consumed. Add in the fact that BigQuery caches queries and you are not charged for cached results, you can have a very cost effective data warehouse if you are serving up canned reports.

Planet Scale Infrastructure

The first time I talked about BigQuery with a Google architect, I was surprised at the scale BigQuery can operate. We were discussing a client moving an on-prem Hadoop infrastructure to the cloud. Without much experience on the topic I suggested we move them to Cloud DataProc. The Googler asked what the use case was and after hearing it said, this might sound strange but BigQuery can easily handle that workload (100s of TB of data in this case) and will be cheaper and easier to manage. After discussing it more, I don’t know if there is a limitation on the size of data you can throw at BigQuery and am now of the opinion that Hadoop/DataProc are really for backwards compatibility and you should not be developing new things on them. I am sure someone that is a Hadoop expert and not a BigQuery fan boy like me can educate me otherwise.

Data Studio Tour:

Now that we are visualizing data, you can use tools like Tableau or PowerBI in this architecture, however, there are a few compelling things about DataStudio I want to highlight here. It is a data visualization tool but I found it to be more than just that and with some attributes that make it worth considering before you drop money on a per user tool from a competitor. Some of the service highlights:

Cost:

Google Cloud does not highlight DataStudio in their services portfolio, but it is a powerful and cheap (FREE!) service. By comparison, similar tools like Tableau and PowerBI charge based on user counts as well as user types. DataStudio is not as powerful as some of those tools, but it does create great looking visualizations and is highly customizable… did I mention it is FREE!

Data Exploration:

One of the things I did not use extensively, but I could appreciate if I was a power user was the ability to take data exploration through a process with seamless integration. For example, if you are exploring data in BigQuery via SQL and want to look for insights in your query you can click on EXPLORE IN DATA STUDIO.

This takes my results and exports them to the Explorer view in DataStudio. From there I can analyze my data to find insights and even create visualizations. Once I have something that is interesting I can export that to a DataStudio report to share with others and include in other visualizations. This is all integrated and seamless from a user experience. No copy and paste, no moving files around, just progressing through exploration of the data to a final product that I can share with others. I have found myself doing this process with tools like Excel where I might pull data from a database and save the results as a csv, load that csv to a spreadsheet, create workbooks and formulas to do some analysis… then share my spreadsheet. Doing a similar process with DataStudio was liberating and refreshing compared to the minutia I would have had to manage doing data exploration in Excel.

Visualization

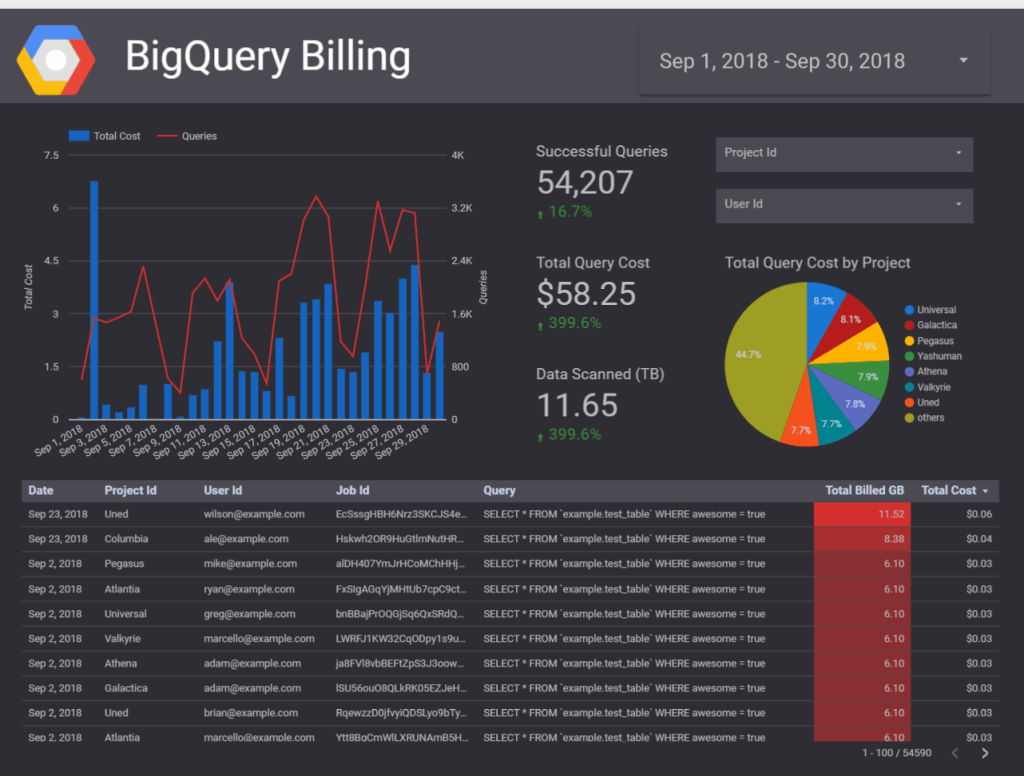

DataStudio does the basics well. Visualizations are clean and you can do just about anything with it. I pulled a few images of reports from the Data Studio Gallery to show how it can be used to visualize data.

To tell stories…

To interact with large data sets…

To create professional visualizations…

The visualizations are nice looking and I was able to develop my own rather quickly. There were some things that took a little getting used to. For example, DataStudio guesses at what you want to visualize when you drop a control (for example a pie chart) onto your report. The guesses were pretty good, but I found myself changing them frequently. Despite it being a web based report designer, it was very powerful and customizable (as you can see from a few of the Gallery shots).

Collaborating:

This web based authoring becomes a strength when it comes to deployment time. I found it very easy to share and collaborate on reports with peers once I had a report developed. I could control what people did with the reports I developed and see their changes. This was seamless and refreshing compared to other experiences with tools where I had to deploy reports developed on my local machine in a disjointed process via the web.

Data Blending:

When working with data you will inevitably need to consider combining data from disparate sources. For example, weather data with store operations. There are multiple ways to accomplish this task. The ‘legacy’ way would be to go to the data layer and ‘join’ the data. Maybe in a table, a SQL statement or in a meta-data layer provided by the report visualization tool. DataStudio allows you to blend data in the visualization itself. You simply select the data you want to ‘blend’ and ask it to blend the data. The result will be a visualization that joins the data together. It is a simple and easy to use model for power users.

DataStudio Summary:

To recap what some of the powerful aspects of DataStudio are:

- Super Cheap – FREE!

- Data Exploration – Seamless integration between BigQuery, Explorer and DataStudio for power users

- Great looking visualizations

- Collaboration – Co-develop and share your insights with a simple interface

- Data Blending – Combine data in your visualization layer rather than data layer

Additional thoughts on pricing:

Taking this up a level to our overall architecture, there are some levers in this architecture when it comes to pricing. To recap what we discussed earlier:

- Cloud DataPrep: You are going to pay for the ‘workers’ required to transform your data

- BigQuery: You pay for your data at rest (BigQuery Storage) + the data processed in a query (BigQuery Compute).

- DataStudio: Currently free

To illustrate cost in a small sample, I loaded 3M rows of data (1.3GB) with some basic transformations on each row (using Cloud DataPrep). Stored it (In BigQuery Storage) and used DataStudio to query and visualize a report (consumed BigQuery Compute). That process to transform the data, store it and run several queries on it cost me $0.60.

There are a few things driving that cost down in my example:

- BigQuery does not charge for the first 10GB of data stored per month

- The first 1TB of data processed each month is free

Those two items are part of what Google calls their “always free” tier of services. So, basically for this small sample BigQuery usage was free and all I really paid for was DataPrep to manipulate and load my data into BigQuery.

Thoughts on minimizing cost:

- Using Cloud DataPrep for incremental data changes would consume less resources so the ‘kill and fill’ model in many demos might be easy to develop, but is probably not the best enterprise solution.

- For reporting scenarios, enable BigQuery caching. Cached queries are free and the nature of basic reporting is you will run the same queries repeatedly.

- You can use BigQuery for streaming data, but batch ingress to BigQuery is currently free, where streaming inserts are 0.01 per 200MB. We will talk about real-time data architectures in another whitepaper.

- In BigQuery Storage, after 90 days the price of storage drops by 50% for data that has not changed. Google is encouraging you to store your history with this pricing. There is no performance degradation or change in how you access the data.

Final note on cost – DataStudio is currently free and a very capable tool. This is a huge differentiator when comparing to offerings like Tableau and PowerBI. Both of those tools have different models and have a richer set of reporting capabilities. However, Data Studio is a solid visualization platform and for the cost blows the competition away!

Next steps

This paper scratches the surface of what is possible with these powerful ‘serverless’ services. Having this basic architecture in place allows you to consider more advanced things like using machine learning to predict outcomes or use advanced services like natural language processing to enrich and structure data you might not normally think is accessible to your analytics capabilities (call logs for example).

If you are evaluating business intelligence or analytics capabilities, the services from Google Cloud should be at the top of your list for consideration. Contact us today if you would like to dive deeper into your actual use cases and concerns. We would be happy to get you oriented and set-up to consume Google Cloud services.