2 min read

Demystifying Edge Computing: Part 4 – Analytics at the Edge, Fog and Cloud

Analytics at the Edge, Fog and Cloud We have spoken about edge, fog and to a much lesser degree cloud computing. Each of them have pros and...

IoT, or more specifically for this post IIoT (the Industrial Internet of Things), is probably the hottest buzzword today. The whole premise of IIoT is networked devices that connect, interact, and exchange data. In most cases, such a networked device is called an Edge Device. In IIoT, an edge device is any device with network-accessible sensors or actuators, and that includes moving assets (locomotives, cars, airplanes, ships, etc.).

Edge Computing is the ability to collect data, store it temporarily, filter and aggregate it, and send it to the cloud or data center. It also includes the ability to run analytics (make decisions) on the device itself, which avoids the latency of sending data to the cloud for a decision and waiting for that decision to come back before acting. This becomes VERY important when you factor in intermittent connectivity, low bandwidth (and/or the high cost of additional bandwidth), the immediacy of insights, and security-related concerns.
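To make the collect, filter, aggregate, and send cycle concrete, here is a minimal Python sketch. Everything in it is an assumption made for illustration: the `EdgeBuffer` class, its method names, the valid range, and the `send` callback (a stand-in for whatever transport the device actually uses) are hypothetical and do not come from any particular edge platform.

```python
import json
from collections import deque
from statistics import mean


class EdgeBuffer:
    """Hypothetical store-and-forward buffer for an edge device."""

    def __init__(self, valid_range, max_batches=100):
        self.low, self.high = valid_range
        self.readings = []                       # raw samples in the current window
        self.outbox = deque(maxlen=max_batches)  # summaries waiting to be uploaded

    def collect(self, value):
        # Filter: drop readings outside the plausible sensor range.
        if self.low <= value <= self.high:
            self.readings.append(value)

    def aggregate(self):
        # Aggregate: reduce the whole window to one small summary payload.
        if not self.readings:
            return
        summary = {
            "count": len(self.readings),
            "min": min(self.readings),
            "max": max(self.readings),
            "mean": round(mean(self.readings), 2),
        }
        self.outbox.append(json.dumps(summary))
        self.readings.clear()

    def flush(self, send):
        # Send: upload queued payloads; stop and keep them if the link is down.
        sent = 0
        while self.outbox:
            if not send(self.outbox[0]):
                break
            self.outbox.popleft()
            sent += 1
        return sent
```

The point of the design is that the `outbox` keeps summaries on the device while connectivity is down, and only the reduced payload (not every raw sample) ever crosses the network.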

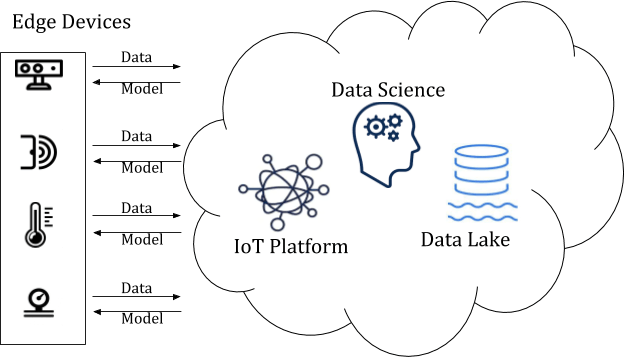

In the illustration above the following is happening:

1. Edge Devices (connected sensors) capture data.
2. The data is filtered and aggregated at the Edge.
3. Over the network, most likely using MQTT, a reduced payload is sent to the IoT Platform (analytics, KPIs, rules, etc. can happen here).
4. The data is pushed to a Data Lake (cold storage).
5. Data Scientists build models that output insights and queue them back to the IoT Platform and/or device management software.
6. The IoT Platform and/or device management software pushes the model back to the Edge Device (connected sensor).
7. Data is analyzed at the Edge Device (connected sensor) and an action is taken. For example, an anomaly with potentially catastrophic consequences is detected and the machine being monitored is shut down immediately.
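The last part of that flow, where a model is pushed down to the device and the device then acts on its own, can be sketched as follows. This is only an illustration under stated assumptions: the `Model` fields, the z-score check, and the `shutdown` callback are all hypothetical, and a real deployment would use whatever model format and actuator interface its platform provides.

```python
from dataclasses import dataclass


@dataclass
class Model:
    """Hypothetical model parameters pushed down from the IoT platform."""
    mean: float
    std: float    # assumed to be greater than zero
    max_z: float  # z-score above which a reading counts as an anomaly


class EdgeMonitor:
    def __init__(self, model, shutdown):
        self.model = model
        self.shutdown = shutdown  # callback that stops the monitored machine
        self.running = True

    def update_model(self, model):
        # The platform pushes a new model to the device.
        self.model = model

    def on_reading(self, value):
        # Score the reading locally and act without a cloud round trip.
        z = abs(value - self.model.mean) / self.model.std
        if self.running and z > self.model.max_z:
            self.running = False
            self.shutdown(value, z)
        return z
```

Because the decision is made inside `on_reading`, the shutdown does not depend on the network being available at the moment the anomaly occurs.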

Do all edge devices have the ability to do all of the above? No. Until recently (the last 5 years or so), most devices haven’t been smart, meaning connected or having the compute power to do anything interesting. For these more limited devices we introduce a new term: Fog Computing. Wikipedia defines Fog Computing as extending cloud computing to the edge of an enterprise’s network. Also known as edge computing or fogging, fog computing facilitates the operation of compute, storage, and networking services between end devices and cloud computing data centers. While edge computing typically refers to the location where services are instantiated, fog computing implies distribution of the communication, computation, and storage resources and services on or close to devices and systems in the control of end-users. That is a mouthful, but it basically says that Fog devices require a gateway for some level of compute and connectivity.

Fog Computing and Edge Computing sound very similar, since both bring intelligence and processing closer to the source. The key difference is where the intelligence and compute power live: in an edge architecture they are on the device itself, whereas in a fog architecture they are on the gateway.

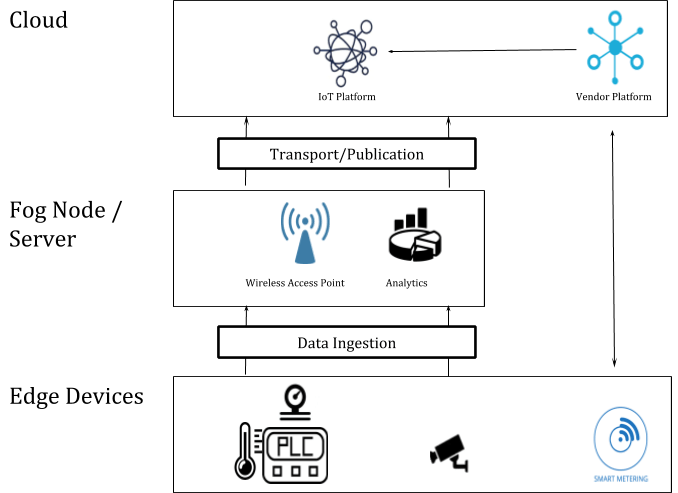

The illustration above shows the relationship between the Edge and Fog layers. This is a common architecture within IIoT because it allows for flexibility and scalability in where analytics run. As you can see, the farther you get from the edge device, the slower the response/processing time you will experience. The use case(s) should dictate where you do your analytics, as there are pros and cons to each layer. For example, doing analytics in the cloud results in the longest latency, but you will have the richest dataset on which to build and execute machine-learning algorithms. In contrast, running analytics at the edge results in almost no latency, but the insights will be shallower because the device only sees its own, less rich dataset.

The following is an illustration that uses both Fog and Edge computing as part of its architecture.

In this example we are taking data off of a PLC, a surveillance camera and a smart meter:

The PLC is capturing several data points, which for this example include temperature and pressure. Let’s assume that it communicates via OPC UA with the Fog Node/Gateway Server, which has software running on it.
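As a rough sketch of what the gateway software might do with those PLC data points, the Python below polls two tags and builds a timestamped snapshot. It deliberately uses no real OPC UA library: `read_tag` is a stand-in for whatever client call the gateway would actually make, and the node identifiers in `TAGS` are hypothetical placeholders written in OPC UA's `ns=...;s=...` string form.

```python
import time
from typing import Callable, Dict

# Hypothetical node identifiers; real ones depend on the PLC's address space.
TAGS = {
    "temperature": "ns=2;s=PLC1.Temperature",
    "pressure": "ns=2;s=PLC1.Pressure",
}


def poll_plc(read_tag: Callable[[str], float], tags: Dict[str, str]) -> dict:
    """Read each tag once and return a timestamped snapshot."""
    snapshot = {name: read_tag(node_id) for name, node_id in tags.items()}
    snapshot["ts"] = time.time()  # gateway-side timestamp, seconds since epoch
    return snapshot


def fake_read(node_id: str) -> float:
    # Stand-in for a real OPC UA read so the sketch runs without a PLC.
    values = {"ns=2;s=PLC1.Temperature": 72.5, "ns=2;s=PLC1.Pressure": 30.1}
    return values[node_id]
```

Passing the reader in as a function keeps the polling logic independent of the protocol, so the same gateway code could be exercised with `fake_read` in a test and with a real client in the field.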

The surveillance camera would operate in a very similar fashion. The big difference is the amount of data that would be sent up to the IoT Platform, which depends on bandwidth and use case. For most picture/video related use cases you will want to do as much processing at the edge as possible for the following reasons:

In the illustration above, I also included a smart meter. For the purpose of this example, assume that this smart meter can be configured to sample data at a specified rate, that rules can be configured to take action on upper/lower thresholds, and that it is connected to the cloud wirelessly. Let’s also assume that the smart meter comes from a vendor that has a direct connection to its own cloud platform.
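The smart meter assumptions (a configurable sample rate plus upper/lower threshold rules) map naturally onto a small configuration object and a rule check. The sketch below is hypothetical: the field names, the rule names, and the values used when calling it are made up for illustration and do not reflect any vendor's actual configuration format.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class MeterConfig:
    sample_interval_s: float  # how often the meter takes a sample
    lower: float              # lower threshold
    upper: float              # upper threshold


def evaluate(config: MeterConfig, reading: float) -> Optional[str]:
    """Return the name of the rule that fired, or None if the reading is in range."""
    if reading < config.lower:
        return "below_lower"
    if reading > config.upper:
        return "above_upper"
    return None
```

For instance, with `MeterConfig(sample_interval_s=60, lower=210.0, upper=250.0)`, a reading of 230.0 fires no rule, while a reading of 251.0 returns `"above_upper"` and the meter could take its configured action.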
